Table of Contents

- Overview

- Role

- Problem

- Goal

- Solution

- Technical Implementation

- Challenges and Learnings

- Final Thoughts

Overview

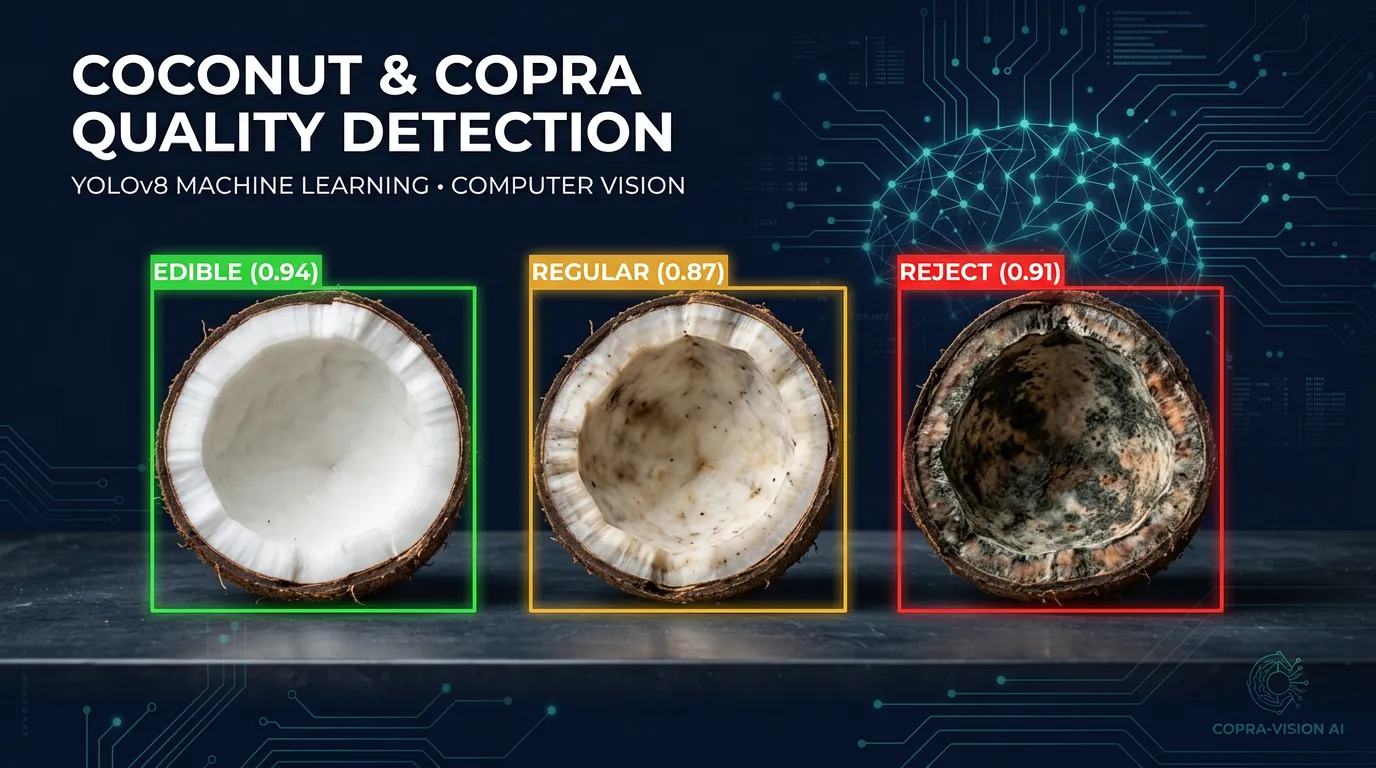

Coconut and Copra Quality Detection is a computer vision system developed as part of research at Universitas Islam Negeri Jakarta. The project applies transfer learning with YOLOv5 and YOLOv8 to classify the quality of coconut and copra (dried coconut meat) automatically, reaching over 90% mAP on the validation set. A desktop GUI built with Tkinter and OpenCV connects the models to industrial machinery through serial communication with embedded microcontrollers.

Role

Machine Learning Engineer & Software Engineer

Problem

Manual quality inspection of coconut and copra in agricultural and industrial settings is:

- Slow and inconsistent, relying on subjective human judgment.

- Prone to error under high-throughput factory conditions.

- Difficult to integrate into automated sorting and processing machinery.

- Lacking in real-time feedback for operators on the production floor.

Goal

- Build accurate object detection models capable of classifying coconut and copra quality in real time.

- Develop a user-friendly GUI for operators to monitor detection results live.

- Integrate the system with industrial machines via embedded microcontrollers.

- Achieve detection accuracy above 90% to meet industrial reliability standards.

Solution

Detection Models

- YOLOv5 (Coconut-Copra-YOLOv5-GUI): Initial model trained on a custom dataset. This version integrates an infrared sensor and uses dual serial COM ports for ESP32/STM32 communication.

- YOLOv8 (Copra-YOLOv8-GUI): Upgraded model using YOLOv8 instance segmentation, trained for 100 epochs, which adds segmentation masks and improves accuracy.

Desktop GUI

- Built with Tkinter and OpenCV for real-time video stream display with detection overlays.

- Displays confidence scores, class labels, and detection bounding boxes/segmentation masks.

- Provides operator controls for starting/stopping detection and serial communication.
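
The display loop described above can be sketched as follows. This is a minimal illustration, not the project's actual GUI code: it assumes Pillow is installed to convert OpenCV frames for Tkinter (the source does not say how frames are handed over), and the names `fit_size` and `run_viewer`, the camera index, and the 33 ms refresh interval are all hypothetical. Detection overlays are omitted.

```python
def fit_size(width, height, max_width, max_height):
    """Scale (width, height) to fit inside the bounds, keeping aspect ratio."""
    scale = min(max_width / width, max_height / height, 1.0)
    return max(1, int(width * scale)), max(1, int(height * scale))


def run_viewer(camera_index=0, max_size=(960, 540)):
    """Show a live camera feed in a Tkinter window (hypothetical helper)."""
    # Imported here so fit_size stays usable on machines without a display.
    import tkinter as tk
    import cv2
    from PIL import Image, ImageTk  # assumption: Pillow bridges OpenCV and Tkinter

    root = tk.Tk()
    root.title("Detection preview")
    label = tk.Label(root)
    label.pack()
    cap = cv2.VideoCapture(camera_index)

    def update():
        ok, frame = cap.read()
        if ok:
            h, w = frame.shape[:2]
            frame = cv2.resize(frame, fit_size(w, h, *max_size))
            rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)  # OpenCV frames are BGR
            photo = ImageTk.PhotoImage(image=Image.fromarray(rgb))
            label.configure(image=photo)
            label.image = photo  # keep a reference so the image is not garbage collected
        root.after(33, update)  # reschedule, roughly 30 frames per second

    def on_close():
        cap.release()
        root.destroy()

    root.protocol("WM_DELETE_WINDOW", on_close)
    update()
    root.mainloop()
```

Calling `run_viewer()` opens the window. Scheduling each frame with `root.after` keeps the Tkinter event loop responsive, so operator buttons stay clickable while video is playing.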

Industrial Integration

- PySerial communication with ESP32/STM32 microcontrollers.

- Triggers physical sorting mechanisms based on quality classification output.
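
As a rough sketch of how a classification result might be turned into a serial command: the class names, the one-byte command codes, the newline framing, the confidence threshold, the device name `COM3`, and the baud rate below are all assumptions for illustration, since the source does not describe the actual protocol. Only `serial.Serial` and its `write`/`flush` methods come from PySerial.

```python
# Hypothetical mapping from quality class to a one-byte actuator command.
COMMANDS = {"good": b"G", "reject": b"R"}


def encode_command(label, confidence, threshold=0.5):
    """Return a newline-terminated command, or None if the result is unusable."""
    if confidence < threshold or label not in COMMANDS:
        return None
    return COMMANDS[label] + b"\n"


def send_command(port, label, confidence):
    """Write the command for this detection to an open port; report if sent."""
    payload = encode_command(label, confidence)
    if payload is None:
        return False
    port.write(payload)
    port.flush()  # push the bytes out before the next frame is processed
    return True


def open_port(device="COM3", baudrate=115200):
    """Open the microcontroller port (device name and baud rate are assumed)."""
    import serial  # PySerial, imported lazily so the helpers work without it
    return serial.Serial(device, baudrate, timeout=1)
```

Because `send_command` only needs an object with `write` and `flush`, it can be exercised against an in-memory buffer such as `io.BytesIO` before any hardware is connected.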

Technical Implementation

Transfer Learning Pipeline

- Custom dataset collected and annotated for coconut and copra quality classes.

- Fine-tuned YOLOv5 and YOLOv8 pre-trained weights on domain-specific data.

- Training ran on a CUDA-capable NVIDIA GPU (RTX class).

- Both model versions achieved over 90% mAP on the validation set.
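
For the YOLOv8 variant, fine-tuning along these lines could look like the sketch below, which uses the Ultralytics Python API. The 100 epochs come from the project description; the checkpoint size (`yolov8n-seg.pt`), the dataset file name `copra.yaml`, the image size, the batch size, and the helper names are assumptions, not the project's actual settings.

```python
def build_train_args(data_yaml, epochs=100, imgsz=640, batch=16, device=0):
    """Collect training arguments; everything except epochs is an assumed default."""
    return {
        "data": data_yaml,   # dataset definition with image paths and class names
        "epochs": epochs,
        "imgsz": imgsz,
        "batch": batch,
        "device": device,    # 0 selects the first CUDA GPU
    }


def fine_tune(weights="yolov8n-seg.pt", data_yaml="copra.yaml", **overrides):
    """Fine-tune pre-trained segmentation weights and return validation metrics."""
    from ultralytics import YOLO  # imported lazily; requires the ultralytics package

    model = YOLO(weights)  # start from pre-trained weights (transfer learning)
    model.train(**build_train_args(data_yaml, **overrides))
    return model.val()     # evaluate on the validation split from data_yaml
```

Starting from pre-trained weights means only the domain-specific adaptation has to be learned from the custom dataset, which is the point of the transfer learning approach.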

Hardware Integration

- Infrared sensors (YOLOv5 version) for object presence detection.

- Serial commands sent to microcontroller to actuate sorting mechanisms.
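
One way to use a presence sensor is to trigger a single classification per object. The sketch below assumes the infrared sensor reports a binary blocked/clear state and that inference should run once on each clear-to-blocked transition; the source does not state either, and `rising_edges` is a hypothetical helper.

```python
def rising_edges(readings):
    """Yield the index of each reading where the sensor goes from clear to blocked."""
    previous = False
    for index, blocked in enumerate(readings):
        blocked = bool(blocked)
        if blocked and not previous:
            yield index  # a new object has just entered the sensor beam
        previous = blocked


# Two objects pass the sensor: one at reading 2, another at reading 6.
print(list(rising_edges([0, 0, 1, 1, 1, 0, 1])))  # [2, 6]
```

Reacting to transitions rather than to every blocked reading avoids sending repeated commands while the same object is still in front of the sensor.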

Challenges and Learnings

- Dataset Collection: Acquiring and annotating a sufficiently diverse dataset of coconut and copra samples required significant fieldwork.

- Real-time Performance: Optimizing inference speed to keep up with conveyor belt throughput required model pruning and GPU acceleration.

- Serial Communication Reliability: Handling dropped serial packets and timing issues between the GUI and microcontroller required robust error handling and retry logic.

- Environment Variability: Lighting changes on the production floor affected detection confidence; addressed through data augmentation during training.
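
The retry logic mentioned under Serial Communication Reliability might look like the following sketch. It assumes the microcontroller answers each command with an `OK` line, which the source does not confirm; the acknowledgement text, retry count, and delay are illustrative. `FlakyPort` is an in-memory stand-in for dry runs, not part of PySerial.

```python
import time


def send_with_retry(port, payload, ack=b"OK", retries=3, delay=0.05):
    """Write payload and wait for an acknowledgement line; retry on silence.

    Returns the number of attempts used, or raises TimeoutError.
    """
    for attempt in range(1, retries + 1):
        port.write(payload)
        reply = port.readline().strip()  # empty bytes when the read times out
        if reply == ack:
            return attempt
        time.sleep(delay)  # brief pause before resending
    raise TimeoutError(f"no acknowledgement after {retries} attempts")


class FlakyPort:
    """In-memory stand-in that ignores the first `drops` commands."""

    def __init__(self, drops):
        self.drops = drops
        self.sent = []

    def write(self, data):
        self.sent.append(data)
        return len(data)

    def readline(self):
        if self.drops > 0:
            self.drops -= 1
            return b""  # simulate a dropped packet (read timeout)
        return b"OK\n"
```

With a real `serial.Serial` object, the port needs a `timeout` set so that `readline` returns instead of blocking forever when a reply is lost.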

Final Thoughts

- Transfer Learning Accelerates Development: Leveraging pre-trained YOLO weights drastically reduced the training time needed to reach production-ready accuracy.

- YOLOv8 Outperforms YOLOv5: The segmentation capability of YOLOv8 provided more precise quality boundaries, improving classification in edge cases.

- End-to-End Integration Matters: Bridging the gap between the ML model and the physical machine was as challenging as building the model itself.