Table of Contents

- Overview

- Role

- Problem

- Goal

- Solution

- Technical Implementation

- Team

- Challenges and Learnings

- Final Thoughts

Overview

Patuli (Pahlawan Tuli) is an Android application that uses on-device machine learning to help users learn and communicate in Bisindo (Indonesian Sign Language). Built as a capstone project for Bangkit Academy 2023, a program led by Google, GoTo, and Traveloka, the app brings real-time sign language recognition to mobile devices to improve accessibility for the deaf and hearing-impaired community in Indonesia.

Role

Machine Learning Engineer

Problem

Communication barriers between hearing and hearing-impaired individuals remain a significant accessibility challenge in Indonesia:

- Most Indonesians are unfamiliar with Bisindo, limiting meaningful interaction with the deaf community.

- Existing sign language learning resources are scarce, static, and not interactive.

- No accessible mobile tool existed for real-time Bisindo gesture translation in the Indonesian context.

Goal

- Build an accurate Bisindo gesture recognition model deployable on Android devices.

- Create an interactive learning platform that teaches sign language through modules and gamification.

- Enable real-time translation of Bisindo movements to bridge communication gaps.

- Make the application lightweight enough to run on-device via TFLite without requiring an internet connection.

Solution

Core Features

- Learning Modules: Structured lessons covering Bisindo alphabets, numbers, and common words.

- Gamification: Interactive challenges to keep users engaged and motivated throughout their learning journey.

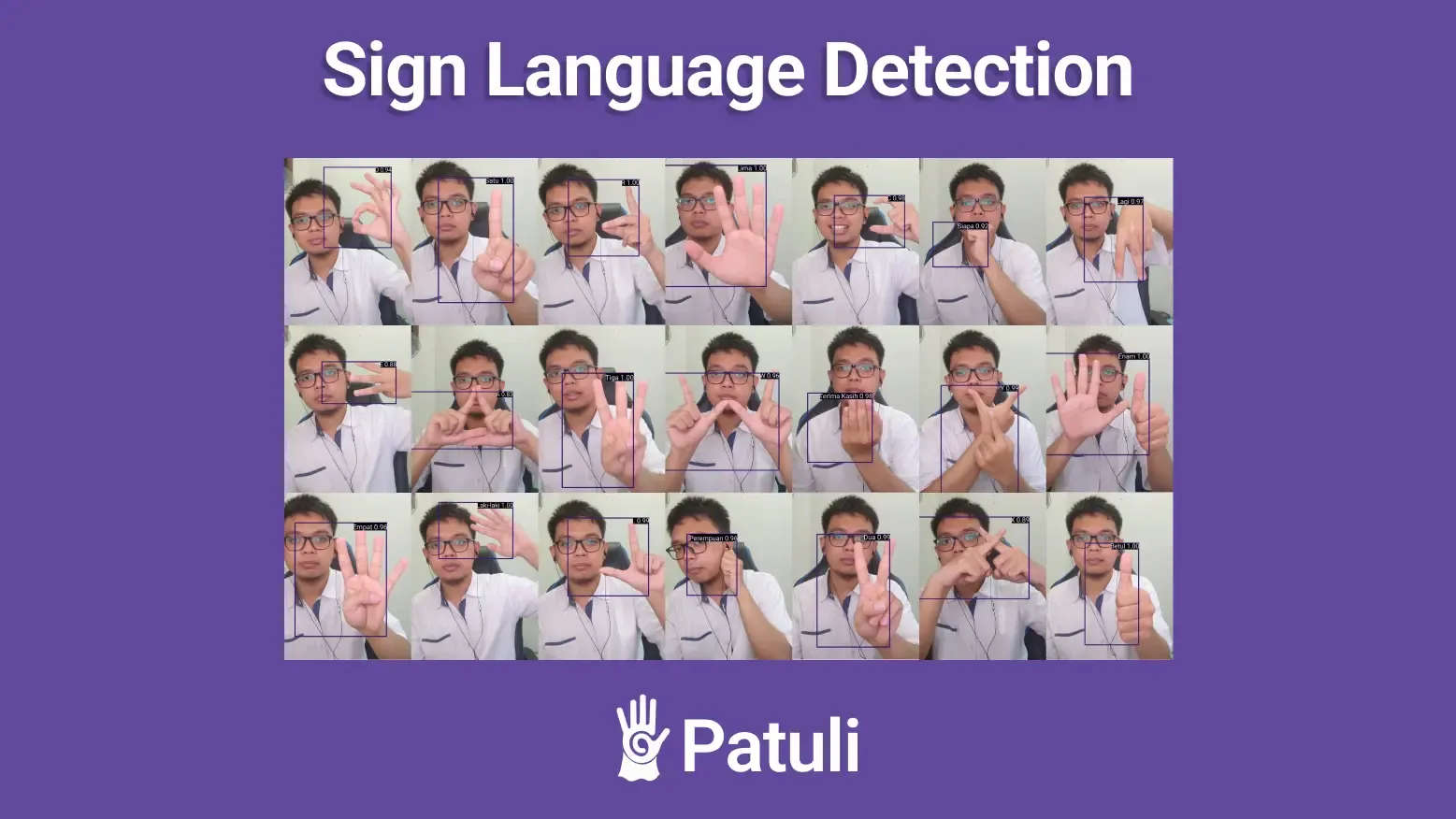

- Real-Time Translation: Live camera-based gesture detection that translates Bisindo signs instantly, enabling direct communication with hearing-impaired individuals.

Repositories

- Machine Learning: Patuli-ML

- Mobile Development: Patuli-Android

- Cloud Computing: Patuli-Cloud

Technical Implementation

Machine Learning

- Base Model: MobileNetV2 pre-trained on ImageNet, fine-tuned via transfer learning on a custom Bisindo gesture dataset.

- Accuracy: Achieved over 80% accuracy on the validation set across gesture classes (alphabets, numbers, words).

- Deployment: Model exported to TFLite format for efficient on-device inference on Android.

- Framework: TensorFlow / Keras for training; TFLite for mobile deployment.
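
The transfer-learning and export steps listed above can be sketched roughly as follows. This is a minimal illustration that assumes a standard Keras workflow. The class count, input size, dropout rate, learning rate, and the function names `build_model` and `export_tflite` are assumptions made for the example, not details taken from the Patuli-ML repository.

```python
import tensorflow as tf

IMG_SIZE = (224, 224)  # MobileNetV2's default input resolution
NUM_CLASSES = 26       # hypothetical: e.g. one class per alphabet letter


def build_model(num_classes=NUM_CLASSES, weights="imagenet"):
    """MobileNetV2 backbone with a new classification head (illustrative)."""
    base = tf.keras.applications.MobileNetV2(
        input_shape=IMG_SIZE + (3,), include_top=False, weights=weights
    )
    base.trainable = False  # freeze the pre-trained features at first

    inputs = tf.keras.Input(shape=IMG_SIZE + (3,))
    # Scale raw [0, 255] pixels to the [-1, 1] range MobileNetV2 expects.
    x = tf.keras.layers.Rescaling(1.0 / 127.5, offset=-1.0)(inputs)
    x = base(x, training=False)  # keep BatchNorm layers in inference mode
    x = tf.keras.layers.GlobalAveragePooling2D()(x)
    x = tf.keras.layers.Dropout(0.2)(x)
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)

    model = tf.keras.Model(inputs, outputs)
    model.compile(
        optimizer=tf.keras.optimizers.Adam(1e-3),
        loss="sparse_categorical_crossentropy",
        metrics=["accuracy"],
    )
    return model


def export_tflite(model):
    """Convert a trained Keras model to TFLite flatbuffer bytes."""
    converter = tf.lite.TFLiteConverter.from_keras_model(model)
    return converter.convert()
```

In a workflow like this, the model would be trained with `model.fit(...)` on the gesture dataset, and the bytes returned by `export_tflite` would be written to a `.tflite` file that ships with the Android app. Freezing the backbone first and training only the new head is a common starting point; whether the team later unfroze layers for further fine-tuning is not stated in the source.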

Android Integration

- TFLite model integrated directly into the Android app for real-time camera-based inference.

- Gesture recognition requires no network call: fully on-device processing keeps latency low and lets the app work offline.
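
Per-frame predictions from a live camera feed tend to flicker between classes, so real-time recognizers often stabilize them before showing a result. The sketch below shows one common approach, a majority vote over a sliding window of recent frames. It is written in Python for consistency with the training code, and the class name `PredictionSmoother`, the window size, and the confidence threshold are hypothetical; the source does not say whether the app uses this technique.

```python
from collections import Counter, deque


class PredictionSmoother:
    """Majority vote over the last `window` frame predictions (illustrative)."""

    def __init__(self, window=5, min_confidence=0.6):
        self.min_confidence = min_confidence
        self.history = deque(maxlen=window)

    def update(self, label, confidence):
        """Record one frame's top prediction; return a stable label or None."""
        # Low-confidence frames are stored as None: they cannot win the vote,
        # but they still push older predictions out of the window.
        confident = confidence >= self.min_confidence
        self.history.append(label if confident else None)

        votes = Counter(item for item in self.history if item is not None)
        if not votes:
            return None
        best, count = votes.most_common(1)[0]
        # Report a label only once it holds a strict majority of the window.
        return best if count > self.history.maxlen // 2 else None
```

Calling `update` once per camera frame with the model's top label and its probability returns `None` until one label dominates the window, which trades a few frames of delay for a steadier on-screen translation.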

Cloud Computing

- Backend services deployed to support user authentication, progress tracking, and learning module content delivery.

Team

Team ID: C23-PS037 · Bangkit Academy 2023

| Name | Learning Path |
|---|---|
| Ammar Sufyan | Machine Learning |
| Fauzan Farhan Antoro | Machine Learning |
| Belvin Shandy Aurora | Machine Learning |
| Benidiktus Valerino Gozen | Mobile Development |
| Vincentius Agung Prabandaru | Cloud Computing |
| Muhammad Imam Alif | Cloud Computing |

Challenges and Learnings

- Dataset Quality: Collecting a consistent, well-lit, and diverse dataset for Bisindo gestures was the most time-intensive part of the project. Variability in hand sizes, angles, and lighting required extensive augmentation.

- Model Size vs. Accuracy Trade-off: MobileNetV2 was chosen specifically for its balance between accuracy and model size, making it feasible for TFLite deployment without significant quality loss.

- Real-Time Performance: Optimizing inference speed on mobile required quantization and careful post-processing to maintain smooth frame rates during live camera detection.

- Cross-team Coordination: Collaborating across three learning paths (ML, Android, Cloud) under a tight capstone deadline required clear API contracts and regular syncs.
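
To make the quantization point above concrete, the following sketch works through the arithmetic of 8-bit affine quantization, the scheme TFLite uses for int8 tensors, where a real value is approximated as `(q - zero_point) * scale`. The source does not say which quantization mode the team used, so this is an assumption, and the function names are hypothetical.

```python
def quantize_params(x_min, x_max, qmin=-128, qmax=127):
    """Scale and zero point that map [x_min, x_max] onto the int8 range."""
    # The representable range must include 0.0 so that zero maps exactly.
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    scale = (x_max - x_min) / (qmax - qmin)
    if scale == 0.0:
        scale = 1.0  # degenerate all-zero range; any positive scale works
    zero_point = int(round(qmin - x_min / scale))
    return scale, zero_point


def quantize(x, scale, zero_point, qmin=-128, qmax=127):
    """Real value -> int8 code, clamped to the valid range."""
    q = int(round(x / scale)) + zero_point
    return max(qmin, min(qmax, q))


def dequantize(q, scale, zero_point):
    """int8 code -> approximate real value."""
    return (q - zero_point) * scale
```

Each weight stored this way takes one byte instead of the four bytes of a float32, which is where the roughly fourfold size reduction comes from. The cost is a rounding error of at most half of `scale` for values inside the calibrated range, and values outside it are clamped.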

Final Thoughts

- Transfer Learning Accelerates Delivery: Starting from a pre-trained MobileNetV2 backbone allowed the team to reach over 80% validation accuracy within the project timeline without training from scratch.

- On-Device AI Improves Accessibility: Running inference locally means the app works without internet, which is critical for users in areas with limited connectivity.

- Meaningful Impact: Building a tool that tangibly helps the hearing-impaired community made this one of the most rewarding projects to work on.